![[Home]](http://www.bayleshanks.com/cartoonbayle.png)

Table of Contents for Programming Languages: a survey

In this book we'll focus on instruction sets, addressing modes, etc, rather than on other aspects of processors such as pipelining.

NOTE: regarding this and all of the chapters about assembly language/ISAs, the author has only programmed using 6502 assembly! Everything else here should be taken with a grain of salt.

Some common features of things that tend to be called 'assembly languages', 'instruction set architectures' and 'machine code'. These are observations, not definitions, and they are not universally true across such languages.

Values are represented as sequences of bits. This makes sense when you think about how discrete information is stored in hardware. There are many idiosyncratic ways that information could be represented in various hardware systems, but one common pattern is to have some quantity (such as voltage) associated with some location or part of the physical world, and to have that quantity represent a discrete value within the system. Perhaps the simplest way to do this is to map different ranges of the physical quantity to different discrete values within the system. For example: when the value of that quantity is below some fixed constant level X, the quantity is 'LOW' and represents a bit of '0', but when the value is above X, the quantity is 'HIGH' and this represents '1'.

If this sort of representation is adopted, one can see that in theory, it could be extended to trinary or higher-radix representations, but this means dividing the range of physical values into more partitions. In order to pack a large amount of information storage into a small space, and in order to use less energy and produce less heat, you can imagine that it might often be desirable for the range of physical values to be made as small as possible, until you get close to the noise limits of the measuring apparatus. You can see that in this sort of situation, the simplest thing to engineer is probably just to keep to binary where the range of physical values only has two partitions, LOW and HIGH; then engineer the system so that there is in practice a gap in the middle that you try to stay out of, so that, as long as the noise is much smaller than the gap, you avoid errors.

Hence, assembly languages represent things in terms of bits.

When we talk about numbers, we understand that a number can have arbitrary many digits. But when we compute with numbers, we have to build physical devices that look at bits and take different actions depending on what the values of those bits are. At some point the rubber has to meet the road, and something in hardware has to be constructed that can only 'look at' a fixed maximum number of bits at one time.

Hence, assembly languages represent both instructions and data in fixed-size (or at least fixed-maximum-size) chunks. Numbers are not represented as arbitrarily long strings of digits; they are represented as a fixed number of bits (which can only encode numbers up to some highest-encodable-number). Instructions and their arguments are not represented as strings of characters or as expressions of arbitrary length and depth; they are represented as fields of bits of a fixed-size (or at least a fixed-maximum-size).

The structure of assembly language memory is a linear array of memory locations.

In higher-level languages, memory locations are named by variables.

In assembly languages, the values in memory are 'named' by integers. The memory is like a long row of (mostly) identical storage lockers. If you think about it, this is one of the simplest ways to do it; the person building the hardware doesn't know what each memory location will be used for, but e knows that a lot of memory locations will be needed. So what else can they do but provide a lot of memory locations, indexed by number?

If memory locations are a linear array, then it makes sense that the simplest way to structure programs is a linear array of instructions.

This contrasts with higher-level languages in which programs are often structured as trees of elements such as expressions and blocks. If a language defines programs in terms of trees, at some point those trees have to somehow be mapped onto the linear array of memory provided by the hardware. So, when designing an assembly language, the simplest thing to do is get rid of the trees altogether, and just represent the program as a sequence of instructions.

This loosely follows from the principal of memory as a linear array.

In high-level languages, variables and/or the values in variables often have a type, and different operations are applicable to different types.

But the values in memory themselves are just bits in storage locations. The storage locations themselves are general-purpose; the hardware designer didn't know how many numbers and how many strings (for example) would need to be stored, so they just made memory storage that stores bits, and left it up to the software programmers to decide whether those bits are to be interpreted as numbers or strings.

In assembly languages, the values in storage locations are ultimately just bit patterns. For example, a programmer can access the bit pattern in a storage location as if represents an integer at one moment, and then a moment later access the same bit pattern in the same storage location as if it represents a character in a string.

This loosely follows from the principal of memory as a linear array.

In assembly languages, pointers are just integers, namely, they are the integer indices into the linear array of memory.

This loosely follows from the principals of memory as a linear array, and untyped data. It is just a special case of the above principals, but it is an important one.

Any system for universal computation must provide a way to branch, that is, to conditionally execute subprogram (A) in one situation, or subprogram (B) in a different situation, and also to loop, that is, to re-execute subprogram (C) over and over again as long as some predicate on the state remains true.

If the program is just a sequence of instructions, then a reference to the currently-executing instruction takes the form of an integer index into that sequence, and as computation progresses to the next instruction, that index increases. This index is called the 'program counter' (PC) or 'instruction pointer' (IP). You can see that in a system in which memory locations are a linear array, and programs are sequences of instructions within that array, perhaps the simplest way to provide a facility for branching and looping is to provide GOTO instructions which can directly set the PC to another value.

This loosely follows from the principal of programs as a linear array of instructions.

The code is, at least metaphorically, stored in specific memory locations (possibly in a separate code memory; and possibly the program may not be able to access code memory).

If one conceptualizes the program counter as a tape head moving over a tape, or if one conceptualizes a thread of control as a physical object or as a path of fluid flow, one might worry that when two threads of control intersect they might get in each other's way (for example, when subprogram A goes from locations 1 to 3 but then jumps to location 6, but subprogram B goes from locations 4 to 6). However, in assembly language, this is not the case; the GOTO instructions can set the PC to any value (within the region of currently-accessible program memory), not just to adjacent or nearby values. The PC can 'jump over' an intervening section of program code with no additional effort. For this reason, the instructions to set the PC are sometimes called 'jumps'.

Whether via registers or a stack, there are only a relatively small number of data storage locations which can be the arguments for operations on data.

todo: some old stuff, need to figure out what i was talking about here: "Close to machine language (bung a 20-bit address, but by using a 16-bit address and 4-bit index. This is the root of the 64k segment size problem that dogs DOS to this day."

Since DOS only ran in 8086 mode there was no easy way to address more than one meg of memory, and various standards were set up to allow access to addresses beyond the 1 meg barrier. The bug in the '286 was useful in that it allowed 8086 mode programs to see the first 64k above 1 meg. It involved some weird messing about with the keyboard controller to toggle the state of the 21st address line, and this is why you still see on some syt not exactly; most assembly languages allow the programmer to define alphanumeric labels for code positions and alphanumeric variable names)"

Linear imperative sequence of opcodes; statements, not expressions No assignment operator to assign to a variable; the 'alphanumeric variable names' mentioned above just map to a single memory location Registers or stack separate from memory Condition flags Untyped Goto and bne style control flow Addressing modes at least 3: immediate, register (or memory), indirect (tho see Parallax Propeller which uses self-modifying code in lieu of indirect) operations on fixed width data (e.g. "assume these memory locations contain 8-bit ints and add them" or "assume these memory locations contain 32-bit floats and add them") sometimes macros: for generating inline, as opposed to called, subroutines

For more inspiration about the sorts of instructions that might go into a VM, one might look at popular CPU instruction sets.

My purpose in including this section is NOT to teach the reader the basics of assembly language and computer architecture; i assume that the reader already knows that. I just want to give you more food for thought about 'minimal' programming languages.

Links:

the winner was: general purpose registers

From http://www.cl.cam.ac.uk/teaching/0405/CompArch/mynotes.pdf :

Classic RISC Addressing Modes:

Less RISCy addr modes (ARM and PowerPC?):

CISC Addressing Modes:

Links:

regularity vs code density:

" Instruction types

Examples of operations common to many instruction sets include: Data handling and memory operations

Set a register to a fixed constant value.

Move data from a memory location to a register, or vice versa. Used to store the contents of a register, result of a computation, or to retrieve stored data to perform a computation on it later.

Read and write data from hardware devices.Arithmetic and logic operations

Add, subtract, multiply, or divide the values of two registers, placing the result in a register, possibly setting one or more condition codes in a status register.

Perform bitwise operations, e.g., taking the conjunction and disjunction of corresponding bits in a pair of registers, taking the negation of each bit in a register.

Compare two values in registers (for example, to see if one is less, or if they are equal).Control flow operations

Branch to another location in the program and execute instructions there.

Conditionally branch to another location if a certain condition holds.

Indirectly branch to another location, while saving the location of the next instruction as a point to return to (a call).Complex instructions

CISC processors include "complex" instructions in their instruction set. A single "complex" instruction does something that may take many instructions on other computers.[citation needed] Such instructions are typified by instructions that take multiple steps, control multiple functional units, or otherwise appear on a larger scale than the bulk of simple instructions implemented by the given processor. Some examples of "complex" instructions include:

Saving many registers on the stack at once.

Moving large blocks of memory.

complicated integer and floating-point arithmetic (sine, cosine, square root, etc.).

SIMD instructions, a single instruction performing an operation on many values in parallel.

Performing an atomic test-and-set instruction or other read-modify-write atomic instruction.

Instructions that perform ALU operations with an operand from memory rather than a register.A complex instruction type that has become particularly popular recently[citation needed] is the SIMD or Single-Instruction Stream Multiple-Data Stream operation or vector instruction, that is an operation that performs the same arithmetic operation on multiple pieces of data at the same time. SIMD have the ability of manipulating large vectors and matrices in minimal time. SIMD instructions allow easy parallelization of algorithms commonly involved in sound, image, and video processing. Various SIMD implementations have been brought to market under trade names such as MMX, 3DNow! and AltiVec?.

Specialised processor types like GPUs for example also provide complex instruction sets. Nonetheless many of these specialised processor complex instruction sets do not have a publicly available native instruction set and native assembly language for proprietary hardware related reasons and are usually only accessible to software developers through standardized higher level languages and APIs. The OpenGL? virtual instruction set and virtual assembly language ARB assembly language and CUDA are examples of such hardware abstraction layers on top of the specialised processor native instruction set. " -- http://en.wikipedia.org/wiki/Instruction_set

Why not just have many thousands or millions of registers?

There are two obvious reasons. First, fast memory tends to cost more money, so a CPU with a ton of registers would cost more (and probably require more power, space, etc). Second, the more registers you have, the slower register access has to be (because the more registers you have, the more space must be traversed to get to the average register, and the speed of light is fixed).

There are also two more subtle reasons. First, if you have more registers, it takes more bits to identify a register, bloating the instruction encoding and decreasing code density (which in turns makes a smaller proportion of a program fit in cache) (note: this disadvantage does not apply to hidden registers). Second, if you have more registers, then you have more registers to save and load during interrupts, or procedure calls (to the extent the calling conventions do not allow unused registers to be skipped, or to the extent that a simpler compiler is not bothering to track register use lifetimes).

Weighted against these costs, we must consider the benefits of more registers. But there are diminishing returns to having more registers; most of the time, code uses only a few registers, and could not make use of more even if available. Approximately 12 registers are sufficient approximately 95% of the time [2].

For compactness of instruction encoding, sometimes the number of registers accessible by program code is smaller than the actual number of registers available in hardware. How can these hidden registers be profitably used?

In this case, to make use of the hidden registers, the hardware sometimes re-interprets the code to place values into different registers even if they were directed to be placed in the same register at different times, when this is safe and when it allows the hardware to parallelize more.

For example, if the assembly code says "r1 = 3; (..do something with r1..); r1 = 5; (..do something else with r1..);", and where the 'something else' could otherwise be done in parallel with the 'something', the hardware might rename the second instance of r1 to a hidden register and then execute these things in parallel, as if the assembly code had said "r1 = 3; (..do something with r1..); r321 = 5; (..do something else with r321..);". Note that even though the register r1 has been renamed to r321 in some of this code, the semantics are unchanged.

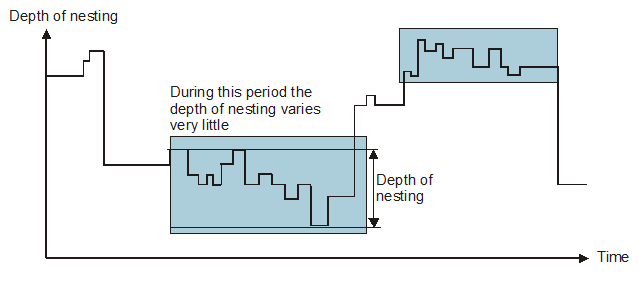

Some early RISC processors (that seems not to have caught on?) had a scheme to try and make it faster to call subroutines by using registers more in place of the stack. The idea was that instead of putting parameters on the stack, you would put them in registers; you could also save some local variables in registers. This was done by having many registers but only having some of them active at any one time, where the 'register window' was the active registers; the 'windows' would overlap, allowing some registers to be used for parameter passing and return. So, if function A called function B, then it would store the passed parameters in the overlapping part of the register window. When B was called, the register window would shift, and B would have access to the past parameters in some registers, while other registers (different from the ones A was using) would be available for B's use. When it returned control to A, it would leave the return values in the overlapping part of the window, and then the window would shift back to what it was before B was called. Now the registers that A was using locally haven't been touched, so A can continue on as it was doing. Note that no time has been spent copying out registers to the stack or saving and loading parameters to/from the stack.

The obvious issue here is that there are relatively few registers, so if function calls are nested deep enough you'll run out. At this point you have to spill registers to the stack (usually the oldest register window is moved to the stack). This means that you are only saving time when the program is going down a few levels and then back up a few levels many times over; when the program is going up many levels the stack is essentially being used as normal:

Another issue is that register windows make register renaming more difficult to implement.

Links:

" A shift operation moves a string of bits by one or more positions left or right. The difference between shift operations depends on:

the direction of the shift (left or right),

the number of shifts – one or more places,

dynamic/static shifts (a dynamic shift permits the number of places shifted to be changed at run-time by using a variable in a register),

the type of shift – arithmetic (preserves the sign), logical, circular (the bit shifted out at one end is shifted in at the other end), extended (the shift takes place through the carry bit to allow multiple-precision arithmetic)." -- http://alanclements.org/armsforpoor.html"In principal there are 16 types of shift operation (arithmetic, logical, circular, and circular through carry, x2 for left/right x2 for static and dynamic). Most processors don’t provide this full set (although the 68K CISC comes close to it). Indeed, it’s not necessary because you can synthesize one shift from another." -- http://alanclements.org/mips.html

https://en.wikipedia.org/wiki/Bitwise_operation#Bit_shifts

todo this is comparing both assembly types of access with HLL too, move it

you need a way to access main memory. some ways are:

what am i talking about here and what are we trying to do? maybe something like symlinks?: if u want something like symlinks, you need a way to identify a destination; an address. and you need a way to pass between addresses and values at that address.

todo: describe the distinction between {copying, passing, or comparing} a referencee (Python) and a value (immutable data langs) (eg the comparing distinction is structural vs pointer equality; the passing one is call-by-ref vs call-by-value; the copying one is shallow copy of 1 pointer vs deep copy (or COW, which is like a virtual deep copy))

Python's system is more readable (you dont have to constantly think about the pointer as a separate thing from the obj) but has more gotchas, esp. when it also has unboxed values (eg a gotcha:

x = 9; y = x; y = 8; (x == y) == False

but

x = [9]; y = x; y[0] = 8; (x == y) == True )

(is Python's eq pointer or structural eq? i think struct but can it do ptr too? todo check)

todo: lookup C++'s clone (copy?), eq, what was the third one? how does this fit in? doesnt python's system give u those 4 free? oh here it is: https://en.wikipedia.org/wiki/Rule_of_three_%28C%2B%2B_programming%29

an idea: what python's system gives you, you can have local variable represneting structs that you address directly like 'x', not like '*x', but you can also alias two locals togeether, eg changing x can change y.

note: immediate mode corresponds to literals in an HLL

todo wrong lookup:

instead of offering an immediate addressing mode , an ISA can allow code rewriting, actually dynamically changing the value in code that is about to be executed

todo: parallax propeller did this, right? look that up (or was it register or was it indirect? i forgot which mode it was, look it up)

---

i guess the word for the thing corresponding to an opcode is not 'instruction' but 'operation' or 'op'; the 'instruction' is an instance of op+arguments found in a program, the 'op' is the thing that the opcode enumerates/identifies/represents.

---

random links relating to x86-style ISA support for stacks:

---

elements of a computer:

todo

Calling conventions associated with popular 64-bit architectures: "Natively, x86_64 passes first 6 arguments in registers, aarch64/ sparcv9/mips64 have 7 - 8 registers for arguments; x86_64 has 6 callee saved registers, and aarch64/sparcv9/mips64 have 11 or more callee saved registers." -- [8]

---

" Due to the large number of bits needed to encode the three registers of a 3-operand instruction, RISC processors using 16-bit instructions are invariably 2-operand machines, such as the Atmel AVR, the TI MSP430, and some versions of the ARM Thumb. RISC processors using 32-bit instructions are usually 3-operand machines, such as processors implementing the Power Architecture, the SPARC architecture, the MIPS architecture, the ARM architecture, and the AVR32 architecture. " [9]

---

"VAX has been perceived as the quintessential CISC ISA, with its very large number of assembly-language-programmer-friendly addressing modes and machine instructions, highly orthogonal architecture, and instructions for complex operations such as queue insertion or deletion and polynomial evaluation.[1]" [10]

---

---

Leaving out too much: No load/store byte or load/store half word in the initial Alpha ISA, and no floating-point load/store double in MIPS I.

Including too much: The shift option for ARM instructions and register windows in SPARC.

Allowing current microarchitectural designs to affect the ISA: Delayed branch in MIPS and SPARC, and floating-point trap barriers in Alpha.To match embedded-market needs, RISC architectures even developed solutions to the code-size issue: ARM Thumb and MIPS16 added 16-bit formats to offer code smaller than x86. Thus, we believe there is widespread agreement on the general outline of a good RISC ISA." -- [11] or [12]

todo: cover:

---

some GPU stuff: " Memory

Memory on modern GPU is broken up into 3 main categories: global memory, shared memory, and registers. Global memory is the GDDR memory that’s advertised on the box of the GPU and is typically around 2-12GB in size, and has a throughput of 300-400GB/s. Global memory can be accessed by all threads across all SMs on the processor, and is also the slowest type of memory on the card. Shared memory is, as the name says, memory that’s shared between all threads within the same SM. It is usually at least twice as fast as global memory, but is not accessible between threads on different SMs. Registers are much like registers on a CPU in that they are the fastest way to access data on a GPU, but they are local per thread and the data is not visible to any other running thread. Both shared memory and global memory have very strict rules on how they can be accessed, with severe performance penalties for not following them. To reach the throughputs mentioned above, memory accesses must be completely coalesced between threads within the same thread group. Similar to a CPU reading into a single cache line, GPUs have cache lines sized so that a single access can serve all threads in a group if aligned properly. However, in the worst case where all threads in a group access memory in a different cache line, a separate memory read will be required for each thread. This usually means that most of the data in the cache line is not used by the thread, and the useable throughput of the memory goes down. A similar rule applies to shared memory as well, with a couple exceptions that we won’t cover here. Threading Model

GPU threads run in a SIMT (Single Instruction Multiple Thread) fashion, and each thread runs in a group with a pre-defined size in the hardware (typically 32). That last part has many implications; every thread in that group must be working on the same instruction at the same time. If any of the threads in a group need to take a divergent path (an if statement, for example) of code from the others, all threads not part of the branch suspend execution until the branch is complete. As a trivial example:

if (threadId < 5) { Do something } Do More

In the code above, this branch would cause 27 of our 32 threads in the group to suspend execution until the branch is complete. You can imagine if many groups of threads all run this code, the overall performance will take a large hit while most of the cores sit idle. Only when an entire group of threads is stalled is the hardware allowed to swap in another group to run on those cores. " -- [13]

---

the difference between superscalar and out-of-order and speculation:

https://www.raspberrypi.org/blog/why-raspberry-pi-isnt-vulnerable-to-spectre-or-meltdown/

---

One way to look at the purpose of an assembly or machine language is that at some level the physical implementation of a computer has to be finite, therefore at some level the language of programs must be composed of fundamental units with a finite upper bound on their length. For example, allowing literally any number of variables would mean that the NAMES of variables must be of unbounded length. That is not in itself a problem, but in order to process such names, the physical implementation, since it is composed of finite pieces, must process the names incrementally; which means that the computation can be described in terms of a simpler set of operations that does not involve names of unbounded length. This is one explanation for why in assembly or machine language we deal either with a fixed number of registers, or with a stack and primitives that can only reach a certain distance into the stack in one step, instead of an unbounded number of variables.

Furthermore, we don't want the finite upper bound for the length of fundamental pieces of the language to be too large, because this relates to the efficiency of the implementation. Therefore, assembly language for register machines have numbers of registers on the order of 4 to 256, rather than offering, say, 64k registers.

(although you do see things like LLVM, which is assembly-like in some ways while offering a large number of registers, but LLVM is a be slightly higher level language that compiles down to, or is interpreted by an interpreter executing in, a 'real' assembly language)

-- me

---

"Keeping the addressing mode specifier bits separate from the opcode operation bits produces an orthogonal instruction set." [14]

---

note that most assembly languages can't easily be COMPILED to another language, because most assembly language have some mechanism for dynamically computing jump targets. These jump targets refer to program locations in the source assembly language program. Compiled code would have to know how to translate a computed jump target (expressed as a location in the source assembly language program) into a program location in the compiled program. In theory the compiled program could contain a data structure explicitly mapping every program location in the source program into a corresponding program location in the compiled program, but in some cases this could approximately double the size of the program.

A common technique to deal with this issue in assembly-like languages that are designed to be compiled is to require every indirect jump to either (a) only jump to targets whose source was a 'snapshot' of the PC at some past moment of program execution (for example, when making a function call, the caller pushes the current value of the PC onto the stack as a return address; then upon completion, the called function pops the stack and jumps to that address), or (b) be associated with a list of all potential jump targets for that particular indirect jump (for example, one indirect jump might index into a jump table), or (c) use provided higher-level primitives for common types of indirect jumps (for example, indexing into a jump table), or (d) require that the indirect jump not jump to any location, but only to labeled locations. For case (a), the compiler doesn't have to do anything extra, because the location being stored is alread a program location in the COMPILED code rather than the source code; for case (b) the compiler could make a small data structure mapping the locations of only the potential jump targets from source to compiled code; case (c) is like case (b) but probably more efficient (for example, in the case of a jump table, instead of blinding making a data structure mapping the program location of each item in the jump table from source to target, the compiler could just make a jump table in the target, although the compiler does still have to do the work to add the facility for jump table creation); case (d) may still require the compiler to make a giant source-to-target program location correspondence table, but only the labeled locations in the program source need to be included in this table.

---

intro link:

https://chortle.ccsu.edu/AssemblyTutorial/

---

book about assemblers:

http://www.davidsalomon.name/assem.advertis/asl.pdf

---

RISC vs CISC:

---

random posts on register renaming:

---

random posts on register windows:

---

some principals:

not sure how to phrase this but another principal:

think about if you had a number of stacks of various but staticly knowable sizes. They are all stored within the memory stack within the current stack frame, and the layout of the current stack frame is staticly knowable. You want to read or write "item #3 in stack #5". Would something that allowed items to be referenced in that way be an assembly language?

Think about how the addressing would work. Unless stack #5 is the first or last stack within the current stack frame, in order to know the offset between the top of the stack and the item you want to reference you must know the length of all of the other items in between the top of the stack and stack #5. This information is staticly knowable, but it's not embeddable within a single instruction as an immediate; a compiler/assembler would have to look at a bunch of data declarations somewhere else, and sum up their sizes.

The assembler would probably precompute this by adding up those sizes and generating a single offset which is the distance in bytes between the top of the stack and "item #3 in stack #5". And then it would emit an assembly instruction containing that offset as an immediate constant.

I argue that this emitted instruction is assembly, but the original "item #3 in stack #5" isn't (it could be thought of as some kind of 'high level assembly' or 'macroassembly' but not assembly proper. Not sure but i think the reason is that non-trivial non-local computation must be done on the source code in order to precompute the immediate constant in the compiled instruction.

now i suppose another way to accomplish this would be to store those staticly known sizes in register(s), and then sum them at runtime. but this seems somehow like too much computation just to decode an operand.

---

calling conventions, stack frames, activation records:

---

how much ILP (instruction-level parallelism) is available in typical programs?

Links:

https://en.wikipedia.org/wiki/Explicit_data_graph_execution

" TRIPS instructions do not encode their source operands, as in a RISC or CISC architecture. Instead, they produce values and specify where the architecture must route them in the ALU array. For example, a RISC ADD instruction adds the values in R2 and R3, and places the result in R1:

ADD R1, R2, R3

The equivalent TRIPS instruction specifies only the targets of the add, not the source operands:

ADD T1, T2

where T1 and T2 are the physical locations (assigned by the compiler) of two instructions dependent on the result of the add, which would have read the result in R1 in the RISC example above. Each instruction thus sends its values to the consumers, and instructions fire as soon as all of their operands arrive. "

-- https://www.cs.utexas.edu/users/cart/trips/publications/computer04.pdf

CASH/Pegasus:

---

"The rule of thumb for C/C++ code is that you get, on average, one branch per 7 instructions...This means that the most parallelism a compiler can easily extract on common workloads is among those 7 instructions." -- David Chisnall

---

---

"Multiported register files (especially multiple write ports) increase complexity and therefore slow down register access." -- https://bernd-paysan.de/4stack.pdf

--- Links: